Socials

Open source tools and practices in state-of-the-art DL research

Appropriate tooling is an essential part of state-of-the-art (SOTA) deep learning research. Recent advances in the field have resulted in the proliferation of large framework ecosystems (TensorFlow, PyTorch, MXNet) as well as smaller, targeted tools that serve specific needs. This social event is centered around all the tools and practices, big and small, that are relevant to the ICLR community. Participants are encouraged to show the open source tools they have built, regardless of their size or adoption. It can be a single CLI command that you find useful or an entire framework. The main goal is to introduce participants to the wide range of tools and practices that can speed up research progress and promote standardization.

Generative ML for Generalization

Deep Generative Models (DGMs) have become spectacularly popular even outside research circles. Despite their success, there is little deeper insight into their use as a tool for generalization (i.e., better representations via data enhancement, augmentation, etc.). Since ICLR does not have a workshop on DGMs, this event will provide a platform for the enthusiast community.

Tuesday (16:00-18:00 GMT) [Live]

Anticipating Risky Research

In 2019, OpenAI adopted a staged release approach for their advanced language model GPT-2, citing concerns around potential misuses of the model. Earlier this year, NeurIPS announced a submission requirement for authors to include a broader impact statement about possible consequences of their research. As ML becomes increasingly advanced and ubiquitous, we are challenged with anticipating the consequences of our research and mitigating risks of malicious use, harmful applications, unintended consequences, and accidents. But while ML researchers may be experts in their own domain, how can they accurately predict the economic, political, and sociological impacts of their work, especially when these might be second- or third-order effects? Should risk screening be the researcher's responsibility? If not theirs, whose? What structural incentives are at play that affect our ability to publish responsibly? How can we improve publication practices in the field to maximize the benefits of research while mitigating risks? Join us for a discussion on these questions and more, hosted by the Partnership on AI. All levels of familiarity and experience are welcome!

Learning Representation for Cybersecurity

Four broad applications of ML in Cybersecurity are Malware Detection, Anomaly Detection, User Behavior Modeling, and Adversarial ML. The ICLR research community continues to produce exciting work that can be of relevance to both researchers and practitioners in Cybersecurity. For example, ideas from Network Representation Learning can be applied to Cyber Terrain graphs, which capture complex relationships between connected devices, to develop robust approaches for detecting insider threats and compromised assets. Similarly, learning low-dimensional representations of the large volume and variety of data generated by executing different types of malware in a sandbox can lead to better malware detection algorithms. It is also worth probing how to adapt the Adversarial ML work done in the domain of Computer Vision to Cybersecurity problems.

Internships and opportunities for students at Amazon

WiML Virtual Panel and Mentoring Session

The WiML Virtual Social will be a session on Navigating COVID-19 (Our New Realities). The COVID-19 pandemic has affected our lives in several ways: how we work, what we do, how we live, and how we communicate with friends and family. This interactive session will look at the ways in which we have adapted and how we carry out our work in the face of this new reality. It will also showcase some of the research and innovations being developed around the coronavirus.

Thursday (16:00-18:00 GMT) [Live]

LatinX in AI Social

A virtual gathering of LatinX in AI members with an hour of invited talks from members of our community whom we are recognizing for their efforts in response to the COVID-19 pandemic. See our webpage: www.latinxinai.org/iclr-2020

Monday (19:00-22:00 GMT) [Live]

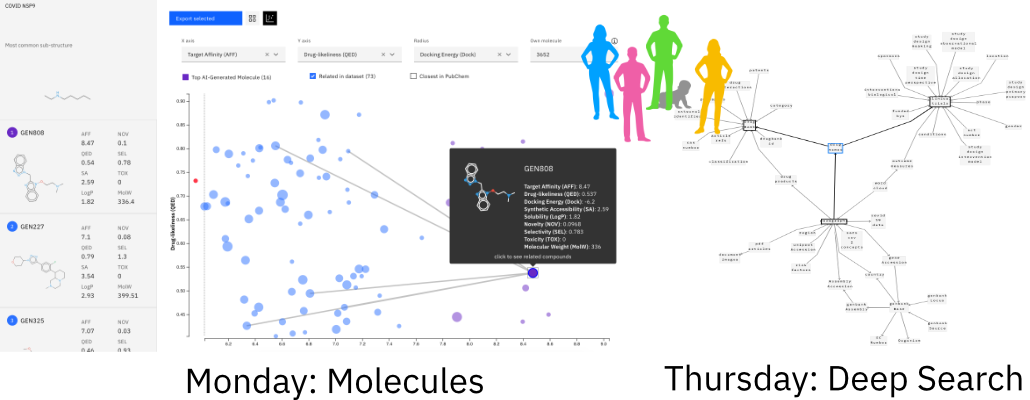

What can AI Researchers do to fight against COVID-19?

The COVID-19 coronavirus presents an unprecedented, global challenge. While citizens are doing their part by social distancing, healthcare workers and first responders are taking care of many of us, and our governments are mobilizing resources to respond to this crisis, we've asked ourselves what we can do at IBM and, more generally, what the AI community can contribute. In this social event, we will provide an overview of the AI tools IBM has offered to help the research community fight against COVID-19. We will then open the floor and use this opportunity to brainstorm novel ideas together with ICLR participants. There will be two sessions, focusing on molecules (Monday) and on deep search (Thursday).

Climate and Sustainability Startups

Lapsed Physicists Wine and Cheese

'Lapsed' (a.k.a. former) physicists are plentiful in the machine learning community. Inspired by the Wine and Cheese seminars at many institutions, this BYOWC (Bring Your Own Wine and Cheese) event is an informal opportunity to connect with members of the community. Hear how others made the transition between fields. Discuss how your physics training prepared you to switch fields and which synergies between physics and machine learning excite you the most. Share the physics jokes your computer science colleagues don't get, and just meet other cool people. Open to everyone, not only physicists; you'll just have to tolerate our humor. Wine and cheese encouraged, but not required.

QueerInAI Social

A relaxed virtual social to meet new people, exchange experiences and research concerning queer scientists in AI/ML, and promote the visibility and perspective of queer people in the context of AI/ML research and applications. queerinai.org

BlackInAI Meet-Up

Black in AI is a place for sharing ideas, fostering collaborations and discussing initiatives to increase the presence of Black people in the field of Artificial Intelligence. If you are in AI and either self-identify as Black or are an ally, let's meet up at ICLR'20 and discuss interests, challenges, opportunities, collaborations and other related issues.

ML Researchers in/interested in Korea

We invite everyone who is part of and/or interested in the ML research scene in Korea. Participants can introduce their own ML research, especially if it's part of ICLR 2020. They can also introduce ICLR 2020 papers that they find interesting and discuss them with other participants. Other possible discussion topics include (but are not limited to): Korean NLP, computer vision and datasets, ML research for COVID-19 and other healthcare problems, and career options in academia/industry in Korea. We welcome everyone from anywhere in the world, as long as you can stay awake if our event falls in the middle of the night for you.

Wednesday/Thursday (01:00-03:00 GMT) [Live]

The RL Social

A sequence of randomly formed small-group discussions centered around Reinforcement Learning. After the success of the NeurIPS RL social, we hope to bring a similar event to ICLR, where participants will get opportunities to discuss a wide variety of topics with new people. This will be accomplished through the Zoom breakout room feature, with 20 minutes per group discussion. Each group can create and discuss their own topic, or choose a topic from our curated list sourced from ICLR conference-goers!

Wednesday (18:00-20:00 GMT) [Live]

Embodied Intelligence Agora

We will discuss the role of embodiment in developing generalizable representations. This social will be composed of multiple breakout rooms, each hosted by a senior researcher in the field who will moderate the conversation and share their thoughts. The discussion topic in each room will be determined dynamically by the participants and may change as the conversation evolves. Conversation topics in the breakout rooms may include questions such as "When should we learn on a real robot?", "How should we evaluate model-based RL methods?", "How do we learn third-person imitation and movement analogies?", "What can we learn about learning from motor development?", and "How should we represent tactile information?". This social will be an opportunity to engage with members of the community who care about robotics and embodied intelligence. We aim to create an inclusive space to share early ideas, with the hope of inspiring novel research and fostering new collaborations.

Thursday (14:00-16:00 GMT) [Live]

Amii Fellows – Meet & Greet

Research with 🤗 Transformers

Many academic groups are building wrapper software on top of Transformers to run experiments (for example, jiant.info), but this is still very much a work in progress. This social is about collaborating and reworking our goals to better fit with the open-source ecosystem. More broadly, we're also interested in which general techniques, ideas, and tools people find useful.

Thursday (20:00-22:00 GMT) [Live]